Introduction

Managing risks must be underpinned by scientifically credible risk assessments,[1] with numerous points of communication along the risk paradigm. Low-probability, high-consequence (rare) events present special challenges to risk communication. Scientific and engineering rigor is essential for rare events, as it is for any risk assessment scenario. Managing the risks presented by rare events requires many of the same fundamental communication elements as any credible risk-based decision analysis. Given the diversity of stakeholders and the unconventional aspects of rare events, however, a greater understanding and application of other factors is needed, especially psychosocial and ethical factors.

Definition of Risk

Decision quality and risk management fundamentally require concise definitions to achieve sustainable consensus among decision makers. Unfortunately, the term “risk” has numerous definitions, depending on the context. For example, the International Organization for Standardization (ISO) defines risk as the effect of uncertainty on objectives and states that such effect can be either positive or negative.[2] A competing risk framework by the Committee of Sponsoring Organizations of the Treadway Commission (COSO) adopts a similar core definition but states that “risks” are negative effects and “opportunities” are positive effects.[3] Lay people may agree that risk is associated with negative effects and not find the distinction very important; however, this is an example of differing priorities between technical professionals, including engineers, and their principal client (i.e., the public). For engineers and other technical professionals, the ISO 31000 definition underpins technical risk management and infrastructure project management. This presents a potential “sender-message-receiver” conflict. At the outset, technical professionals communicate what they perceive to be an accepted interpretation of risk, but that interpretation is misperceived by their intended audience (i.e., the lay public’s perspective is that risk is exclusively the domain of adverse outcomes).

In fact, there is no unanimity in defining risk even within the technical communities. In the engineering community, risk is most frequently expressed by what is commonly known as the risk equation: the product of the estimated numerical value for the consequences of failure and the estimated numerical value for the likelihood of failure. Within the environmental and public health disciplines, risk is expressed as the probability of an adverse outcome, defined as a function of hazard and exposure.[4] Most definitions of hazard are negative, so the environmental risk definition approaches the lay public’s understanding and assessment of risk (i.e., exclusively negative).
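A minimal sketch of the engineering risk equation follows. The failure modes and their likelihood and consequence values are hypothetical, invented only to show how the product is computed and used to rank scenarios; they do not come from any assessment cited in this article.

```python
from dataclasses import dataclass


@dataclass
class FailureMode:
    name: str
    likelihood: float   # estimated probability of failure per year (hypothetical)
    consequence: float  # estimated consequence of failure, e.g. dollars (hypothetical)

    @property
    def risk(self) -> float:
        # Engineering "risk equation": likelihood of failure x consequence of failure
        return self.likelihood * self.consequence


# Hypothetical failure modes, for illustration only
modes = [
    FailureMode("routine pipe break", likelihood=0.5, consequence=20_000),
    FailureMode("pump station outage", likelihood=0.05, consequence=400_000),
    FailureMode("treatment plant inundation", likelihood=0.002, consequence=50_000_000),
]

# Rank from highest to lowest computed risk
for mode in sorted(modes, key=lambda m: m.risk, reverse=True):
    print(f"{mode.name:28s} {mode.risk:>12,.0f}")
```

Sorting by the product places the rare, high-consequence scenario first in this hypothetical set, even though it is by far the least likely to occur.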

Within the public safety and security community, risk is often understood to be synonymous with vulnerability, which is expressed as the product of the consequences of failure, the hazard rate, and the likelihood of experiencing the hazard. Technical professionals often say that any one of these methods defines “risk.” Rather, these and many other methods are simply ways to express risk. Risk is the effect of uncertainty on objectives, and to most laypeople that means the negative effect (loss).
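The public safety and security expression can be sketched the same way. The function name `security_risk` and all input values below are hypothetical. Writing it out makes the required inputs explicit: this form needs a hazard rate and a separate likelihood of experiencing the hazard, whereas the engineering risk equation needs only a likelihood of failure and a consequence.

```python
def security_risk(consequence: float, hazard_rate: float, p_experience: float) -> float:
    """Security-community expression of risk ("vulnerability") as described in the text:
    consequence of failure x hazard rate x likelihood of experiencing the hazard.
    The function name is hypothetical, chosen for this illustration."""
    if consequence < 0 or hazard_rate < 0:
        raise ValueError("consequence and hazard rate must be non-negative")
    if not 0.0 <= p_experience <= 1.0:
        raise ValueError("likelihood of experiencing the hazard must be in [0, 1]")
    return consequence * hazard_rate * p_experience


# Hypothetical pump station exposed to storm surge (illustrative values only)
consequence = 400_000   # cost of losing the station, in dollars (assumed)
hazard_rate = 0.1       # damaging surge events per year (assumed)
p_experience = 0.25     # chance a given surge reaches the station (assumed)

# With the hazard rate in events per year, the result is in dollars per year
print(f"security-style risk: {security_risk(consequence, hazard_rate, p_experience):,.0f} per year")
```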

The Taxonomy of Risk Communication

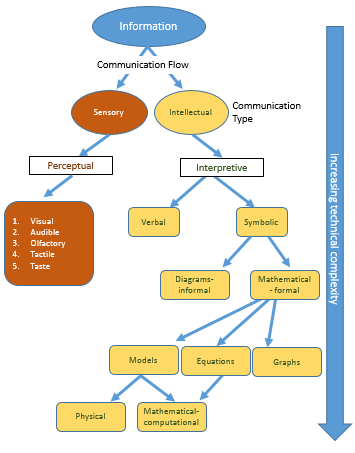

Successful communication is analogous to the signal-to-noise ratio (SNR) in electronics. When the SNR is large, the sender’s message is properly received. However, when the SNR nears zero, communication of the sender’s message has failed. Using this SNR analogy, and assuming the technical professional or risk manager is conveying correct information, the breakdown between the signal generator and the receiver occurs during message delivery and is not the fault of the receiver. For example, engineers often prefer to communicate symbolically, using mathematics and diagrams (Figure 1; bottom right), whereas other professionals (e.g., lawyers and policy makers) also communicate intellectually and interpretively (Figure 1; right side) but more verbally. Much of the lay public, however, communicates perceptively (Figure 1; left side). Thus, rejection of a technically sound proposal may result from miscommunication of the idea by the generator rather than from disagreement with the proposed action.
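For readers unfamiliar with the electronics term, the analogy rests on the standard definition of SNR as the ratio of signal power to noise power, often also expressed in decibels:

```latex
\mathrm{SNR} = \frac{P_{\mathrm{signal}}}{P_{\mathrm{noise}}},
\qquad
\mathrm{SNR}_{\mathrm{dB}} = 10\,\log_{10}\!\left(\frac{P_{\mathrm{signal}}}{P_{\mathrm{noise}}}\right)
```

The statement that communication fails as the SNR nears zero refers to the linear ratio; on the decibel scale the same condition appears as a large negative value, and 0 dB means the signal and noise have equal power.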

Rare events continue to unfold after the initial event. The chain of secondary events following the original disaster (e.g., oil spills, hurricanes, tsunamis, and earthquakes) not only prolongs the impact of the disaster but also introduces new risks. Structural damage propagates safety, environmental, and public health threats and vulnerabilities. Thus, the public may initially accept a plan only to oppose it once they see, smell, and hear its progress (Figure 1; left side). In this case, the risk communication SNR is large during planning but diminishes during implementation. This can be exacerbated in widespread events where responses must occur in stages, beginning with triage.[5] This illustrates that risk communication is dynamic and in need of continuous updates.

Types of communication. All humans use perceptual communication, such as observing body language or smelling an animal feeding operation. The right side of the figure is the domain of technical communication. Consequently, the public may be overwhelmed by perceptive cues or may not understand the symbolic, interpretive language being used by an engineer. For the same reason, the type of communication in a scientific seminar is quite different from that of a public meeting or a briefing for a patient or client.[6]

System Hysteresis and Resiliency

One of the key means of expressing damage to any system is the extent to which it has deviated from a desired state and its inability to return to that state (i.e., hysteresis in its broadest sense). The precautionary principle, for example, deems a risk to be unacceptable if it is severe (i.e., deviated from the desired state) and irreversible (i.e., unable to return to the desired state).[7] Conversely, resiliency is commonly defined as the ability to return to the original form or state after being bent, compressed, or stretched, or the ability to return readily to the desired state following some form of stress such as illness, depression, or adversity. Consistent with the US Environmental Protection Agency’s (USEPA) growing focus on adaptive management, resilience is further defined as the capacity for a system to survive, adapt, and flourish in the face of turbulent change and uncertainty.[8]
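The two ideas in this paragraph, severity (deviation from the desired state) and irreversibility (failure to return to it), can be illustrated with a short sketch that classifies an observed recovery trajectory. The function `assess`, the thresholds, and the service-level data are all hypothetical. One limitation is worth stating: a finite record cannot prove that a change is permanent, so “irreversible” here means only “not recovered within the observation window.”

```python
from typing import Sequence


def assess(trajectory: Sequence[float], desired: float,
           severity_threshold: float, tolerance: float) -> dict:
    """Classify a system's response to a disturbance (hypothetical helper).

    trajectory: observed state values after the disturbance
    desired: the desired (pre-disturbance) state
    severity_threshold: deviation treated as "severe" (assumed value)
    tolerance: residual deviation still counted as "returned" (assumed value)
    """
    if not trajectory:
        raise ValueError("trajectory must not be empty")
    deviations = [abs(x - desired) for x in trajectory]
    peak = max(deviations)       # how far the system was pushed from the desired state
    residual = deviations[-1]    # lasting offset at the end of the record (hysteresis)
    severe = peak >= severity_threshold
    # Assumption: "irreversible" = not back within tolerance by the end of the record
    irreversible = residual > tolerance
    return {
        "peak_deviation": peak,
        "residual_deviation": residual,
        "resilient": not irreversible,
        "precautionary_unacceptable": severe and irreversible,
    }


# Hypothetical service levels (percent of normal) after two storms
recovers = [100, 60, 75, 90, 99]
stuck = [100, 55, 62, 65, 66]
print(assess(recovers, desired=100, severity_threshold=30, tolerance=2))
print(assess(stuck, desired=100, severity_threshold=30, tolerance=2))
```

With these assumed thresholds both storms are severe, but only the second leaves a lasting offset, so only it would be unacceptable under the precautionary rule as stated in the text.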

Resiliency is a key concept when considering and communicating risk related to rare events. Unlike regularly or normally occurring events, where reliability can be considered the dominant concept for preventing occurrence, rare events are by definition unexpected and disproportionately impactful. Making systems more resilient to low-probability, high-consequence events improves the systems’ actual survival and mitigates the psychological effects when such events do occur.

The Psychology of Rare Events

Unlike many risk managers, engineers, and other technical professionals, social scientists have long held that risk is more subjective than objective. “Risk does not exist ‘out there,’ independent of our minds and cultures, waiting to be measured.”[9] Instead, risk is a concept human beings invented to help understand and cope with the dangers and uncertainties of life. Social scientists would say that, although these dangers are real, there is no such thing as “real risk” or “objective risk.” Subjective judgments are made at every stage of the assessment process, from the initial structuring of a risk problem to deciding which endpoints or consequences to include in the analysis, identifying and estimating exposures, choosing dose-response relationships, and so on.[10]

Risk perceptions and risk attitudes play a large role in the psychology of risk, risk treatment, and risk communications. One finding is that laypeople typically prefer more government regulation when confronted with risks that are deemed to be beyond their control, catastrophic, or inequitable in their consequences. On the other hand, a more laissez-faire attitude results when risks are perceived as unnoticeable, longer term, or new.

Danger is real, but risk is socially constructed.[11] To many social scientists and psychologists, risk analysis, risk treatment, and associated communications are a blending of science with social, cultural, and political factors. And those who hold power also control the communication; winners write history.[12] This is particularly true in the case of rare events.

Climate change is an example of this scenario. It is considered a rare event, and communicating its risks therefore requires a four-fold approach: scientists and engineers to attest to the accuracy of the communication, decision scientists to attest to its relevance, social scientists to attest to its clarity, and communication designers to attest to its format. The accuracy, tone, and comprehensibility of any communication can be undermined if any of these four cannot be attested.[13]

The Role of Ethics

Ethics can significantly affect the direction and shape of the communication of technical information. A traditional approach groups ethics into three main categories: virtue ethics, consequential ethics, and deontological ethics. Virtue ethics treats the character of the moral agent (right and wrong, good and bad) as the driving force and is used to describe the ethics of Socrates, Aristotle, and other early Greek philosophers. Consequential ethics refers to moral theories holding that the consequences of a particular action form the basis for any valid moral judgment. From a consequential ethics standpoint, a morally “right” action is one that produces a good outcome or consequence; in other words, “the ends justify the means.”

Deontological ethics is an approach to ethics that determines “goodness” or “rightness” by examining acts, or the rules and duties that the person doing the act strove to fulfill. Immanuel Kant’s theory of ethics is considered to exemplify deontological ethics.[14] Deontological ethics holds that the consequences of actions do not make them right or wrong; rather, the motives of the person who carries out the action make the action right or wrong. Deontological ethics is in direct contrast to consequential ethics and places priority on full disclosure and “treating others in the manner in which you would wish to be treated.”

The legal requirements and codes of ethics for many professions, notably engineering and medicine, are aligned with deontological ethics theory. Of the seven fundamental canons of ethics for engineers, three are of particular interest to technical presentations. The first and foremost is that engineers shall hold paramount the safety, health, and welfare of the public. A second is that engineers shall strive to comply with the principles of sustainable development in the performance of their professional duties. A third is that engineers shall issue public statements only in an objective and truthful manner.[15]

The term “hold paramount” in the first canon means “definitively above all others.” It is a high standard among the universe of professions and their related ethical standards. Such a canon is important for engineers because it sets the order of priority for the public communication of technical information: first and foremost the public good, next the interests of their clients and employers, and finally their personal good. The same cannot be said for all professions, including the general categories of researchers, academics, and scientists. A number of experts and organizations have called for a universal code of ethics across different fields, most recently in biomedical engineering and related research.[16]

Engineers and technical professionals often embrace consequential ethics (i.e., solving problems calls for a results orientation). Interestingly, engineering codes of ethics are predominantly deontological, stressing and enforcing engineering duties. Thus, engineering communication must be both effective (sufficiently precise, accurate, and representative) and honest (the public deserves the truth at all times). These two requirements may at times appear to conflict, since the public may not appreciate what may well be the most technically sound approach.

Case Example: Community Resilience Project[17]

The City of Wilmington, North Carolina, in partnership with New Hanover County and the Cape Fear Public Utility Authority (CFPUA), requested federal assistance to identify land use and infrastructure policy options to reduce the vulnerability of water and wastewater infrastructure to potential sea level rise (SLR) scenarios. The USEPA’s Office of Sustainable Communities, the Federal Emergency Management Agency (FEMA), and the National Oceanic and Atmospheric Administration (NOAA) worked with the City of Wilmington to develop a scope of work to guide the assistance.

SLR and storm surge from more intense coastal storms pose challenges to existing water and wastewater infrastructure in the service area of CFPUA, throughout the City of Wilmington, and in unincorporated areas of New Hanover County. Much of the area is low-lying, so water and wastewater infrastructure (including underground pipelines, pump stations, and treatment facilities) and groundwater resources are potentially vulnerable to rising sea levels and storm surge. For example, older pipes made of iron, steel, or concrete may corrode, deteriorate, or fail at an accelerated rate when exposed to salt water, which could disrupt service to the public and negatively affect public health and water quality. As water levels rise into low-lying areas, access to infrastructure may be compromised, impeding maintenance and repair work or halting services completely. A combination of land use and infrastructure policy options offers the greatest collective potential to enhance the resilience of existing and future water and wastewater infrastructure to potential changes in sea level.

The project was complicated by legislation recently passed by the North Carolina General Assembly mandating that a recent SLR projection developed by the North Carolina Coastal Resources Commission (CRC) could not be used for land use planning and zoning until a more detailed assessment had been performed. The report, developed by the CRC’s Science Panel on Coastal Hazards, reviewed a number of SLR studies and historical tide gage data to project the potential rise along the North Carolina coast. The projections of potential SLR ranged from 40 cm (1.3 feet) to 140 cm (4.6 feet) by 2100.[18] A rise in sea level of this magnitude has significant implications for the North Carolina coast.
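The foot equivalents quoted for the projection range can be checked with the exact definition of the foot (30.48 cm). This is only a unit-conversion check on the figures in the paragraph above, not a reproduction of the panel’s analysis.

```python
CM_PER_FOOT = 30.48  # exact: 1 ft = 0.3048 m by international definition


def cm_to_ft(cm: float) -> float:
    """Convert centimeters to feet."""
    return cm / CM_PER_FOOT


# Low and high ends of the CRC Science Panel projection range for 2100
low_cm, high_cm = 40, 140
for label, cm in (("low", low_cm), ("high", high_cm)):
    print(f"{label}: {cm} cm = {cm_to_ft(cm):.1f} ft")
print(f"spread: {high_cm - low_cm} cm = {cm_to_ft(high_cm - low_cm):.1f} ft")
```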

From a conservative perspective, SLR is typically considered a low-probability, high-consequence event. The projections also supported this perception: because of the uncertainties contained in the models, nearly 75 percent of the projected SLR occurred more than 30 years in the future. The issues related to communicating low-probability, high-consequence events, and the resiliency of public infrastructure, were driving themes of the assessment.

A number of communication strategies associated with the special case of low-probability, high-consequence events were used for the development and implementation of this project. To meet the previously discussed technical and ethical requirements for technical communications, these strategies included:

- Facilitated workshops with diverse groups of participants;

- Geospatial representations of the effects of different scenarios;

- A framework to guide participants through the development and implementation process;

- A series of adaptive management strategies, whereby all potentially impacted sectors could take some form of positive action in incremental steps; and

- A recommendation that an ongoing forum be established to continuously monitor data and perceptions to maintain visibility.

General Adaptive Management Framework Steps for an SLR Application.[19]

Conclusions

Communicating rare events requires a systematic and comprehensive set of communication strategies built from credible physical science and engineering, but enhanced with the social sciences, ethics, and targeted communications. It is a special case of risk management in general and the necessary decision analysis that accompanies it. Unfortunately, most technical professionals receive inadequate training in the full range of communications, psychology, and ethics that are required to successfully deal with this issue.

This paper provides a number of key observations and some best practices for better communication of rare events. It is at best a start, and a number of resources and literature are available for additional consideration. The ultimate responsibility lies with the technical professional, as the sender of the communication, to ensure that it is sufficiently received.

JD Solomon, PE, CRE, CMRP is a Vice President with CH2M and serves as a senior consultant focusing on maintenance & reliability, asset health management & prognostics, financial management, strategic decision making, and master planning. He is a Certified Reliability Engineer (CRE) and a Certified Maintenance and Reliability Professional (CMRP), is certified in Lean Management, and is a Six Sigma Black Belt. JD has a Professional Certificate in Strategic Decision and Risk Management from Stanford, an MBA from the University of South Carolina, and a BS in Civil Engineering from NC State. He currently serves as a gubernatorial appointee on North Carolina’s State Water Infrastructure Authority (SWIA) and the state’s Blue Ribbon Commission to Study the Building and Infrastructure Needs of North Carolina. He also concurrently serves by appointment of NC House Speaker Tim Moore on the state’s Environmental Management Commission (EMC).

Dan Vallero is an internationally recognized expert in environmental science and engineering. For four decades, he has conducted research, advised regulators and policy makers, and advanced the state-of-the-science of environmental risk assessment, measurement and modeling. He is the author of thirteen textbooks addressing pollution engineering, environmental disasters, biotechnology, green engineering, life cycle analysis and waste management. At Duke University, Vallero has led the Engineering Ethics program, a popular and innovative program that introduces students to the complex relationships between professional, scientific, technological and societal demands on the engineer. He teaches courses in air pollution, sustainable design and green engineering, and ethics.

Dr. Vallero holds a Ph.D. in Civil and Environmental Engineering from Duke University, a master’s degree in Environmental Health Sciences (Civil and Environmental Engineering) from the University of Kansas, a master’s degree in City and Regional Planning from Southern Illinois University, and a bachelor’s degree in Earth Sciences and Psychology from SIU.

References

[1] National Research Council, Risk Assessment in the Federal Government: Managing the Process (Washington, D.C.: National Academy Press, 1983), available at http://www.nap.edu/read/366/chapter/1.

[2] ISO, 31000: 2009 Risk Management—Principles and Guidelines, ISO, http://www.iso.org/iso/home/standards/iso31000.htm.

[3] Committee of Sponsoring Organizations of the Treadway Commission (COSO), Enterprise Risk Management—Integrated Framework: Executive Summary, COSO (Sept. 2004), available at http://www.coso.org/documents/coso_erm_executivesummary.pdf.

[4] Daniel A. Vallero, Environmental Biotechnology: A Biosystems Approach (Cambridge, MA: Academic Press, 2015); Daniel A. Vallero, “Exposure Space: Integrating Exposure Data and Modeling with Toxicity Information,” paper presented at OpenTox USA 2015, Feb. 11, 2015, Baltimore, MD.

[5] Daniel A. Vallero and Paul J. Lioy, “The 5 Rs: Reliable Postdisaster Exposure Assessment,” Leadership and Management in Engineering 12, no. 4 (Oct. 2012), http://ascelibrary.org/doi/abs/10.1061/(ASCE)LM.1943-5630.0000200.

[6] T. R. G. Green, “Cognitive Dimensions of Notations,” in People and Computers V, ed. A. Sutcliffe and L. Macaulay (Cambridge, UK: Cambridge University Press, 1989), 443-60; Margaret Myers and Agnes Kaposi, A First Systems Book: Technology and Management, (London: Imperial College Press, 2004); Daniel A. Vallero, Biomedical Ethics for Engineers, (Cambridge, MA: Academic Press, 2007).

[7] Robin Attfield, “Engineering Ethics, Global Climate Change, and the Precautionary Principle,” in Contemporary Ethical Issues in Engineering, ed. Satya Sundar Sethy (Hershey, PA: IGI Global, 2015).

[8] Joseph Fiksel, Iris Goodman, and Alan Hecht, “Resilience: Navigating toward a Sustainable Future,” Solutions 5, no. 5 (Oct. 2014), 38-47.

[9] Paul Slovic and Elke U. Weber, “Perception of Risk Posed by Extreme Events,” Presented at the conference “Risk Management Strategies in an Uncertain World,” Palisades, NY, Apr. 12-13, 2002, available at https://www.ldeo.columbia.edu/chrr/documents/meetings/roundtable/white_papers/slovic_wp.pdf

[10] Ibid.

[11] Paul Slovic, “Trust, Emotion, Sex, Politics, and Science: Surveying the Risk-Assessment Battlefield,” Risk Analysis 19, no. 4 (1999), available at http://chicagounbound.uchicago.edu/cgi/viewcontent.cgi?article=1224&context=uclf.

[12] Analogous to the statement that “history is written by the victors,” attributed to Machiavelli, Winston Churchill, Walter Benjamin, and others.

[13] Baruch Fischhoff, “Nonpersuasive Communication about Matters of Greatest Urgency: Climate Change,” Environmental Science & Technology 41, no. 21 (Nov. 1, 2007), 7204-7208, available at https://www.cmu.edu/dietrich/sds/docs/fischhoff/NonpersuasiveCommMatters.pdf.

[14] Immanuel Kant, Grounding for the Metaphysics of Morals (1785).

[15] American Society of Civil Engineers, “Code of Ethics,” ASCE, July 23, 2006, http://www.asce.org/code-of-ethics/.

[16] Daniel A. Vallero and P. Aarne Vesilind, Socially Responsible Engineering: Justice in Risk Management (Hoboken, N.J.: Wiley, 2007).

[17] CH2MHill, Community Resilience Pilot Project: Wilmington, North Carolina, Prepared for U.S. Environmental Protection Agency (Feb. 2013), available at http://www.wilmingtonnc.gov/Portals/0/documents/Development%20Services/Environment%20and%20Historic%20Preservation/Environmental/EPA_Community_Resilience_Pilot_Project_Report_02-28-13_FINAL.pdf.

[18] North Carolina Coastal Resources Commission Science Panel on Coastal Hazards, North Carolina Sea-Level Rise Assessment Report (Raleigh, N.C.: North Carolina CRC, 2010), available at http://www.ncleg.net/documentsites/committees/LCGCC/Meeting%20Documents/2009-2010%20Interim/March%2015,%202010/Handouts%20and%20Presentations/2010-0315%20T.Miller%20-%20DCM%20NC%20Sea-Level%20Rise%20Rpt%20-%20CRC%20Science%20Panel.pdf.

[19] Community Resilience Pilot Project, 1-7.